These tools are often quite powerful, but are designed for enterprise contexts and sometimes come with data storage or subscription fees. Scrapy and Beautiful Soup: Python librariesĭesktop Applications: Downloading one of these tools to your computer can often provide familiar interface features and generally easy to learn workflows.Some popular tools designed for web scraping include:

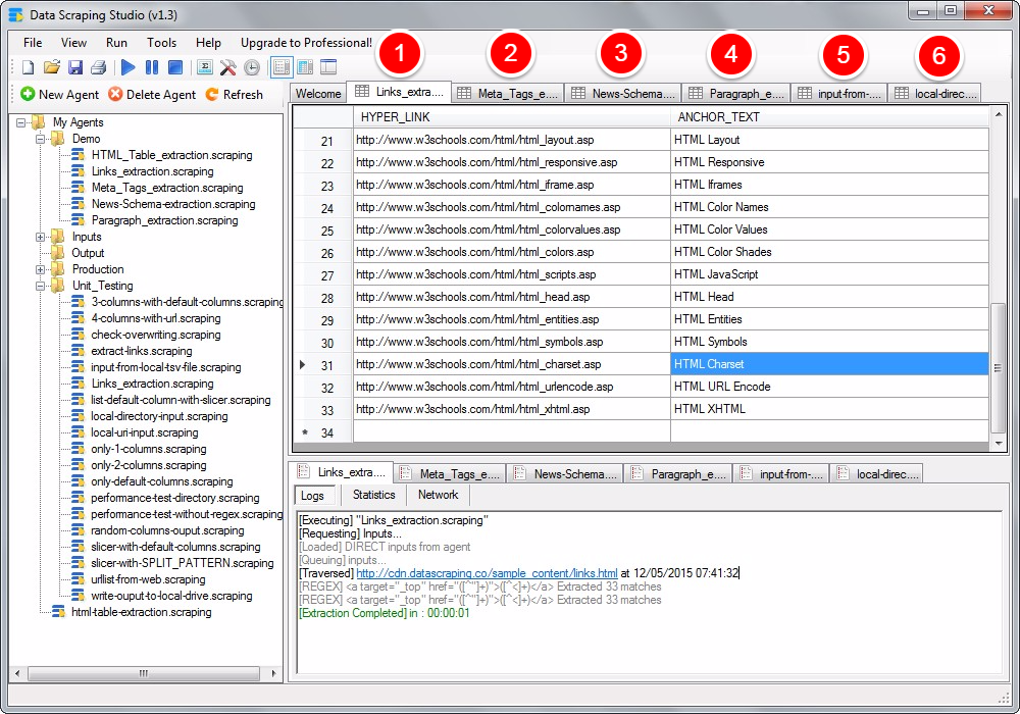

It’s important to remember that to set up and use these tools, you don’t always need to be a programming expert and there are often tutorials that can help you get started. These tools require more up front learning, but once set up and going, are largely automated processes. Programming Languages: For large scale, complex scraping projects sometimes the best option is using specific libraries within popular programming languages. Web Scraper.io: Available for Chrome and Firefox.Plug-ins often require more manual work in that you, the user, are going through the pages and selecting what you want to collect. Some tools require subscription fees, but many are free and open access.īrowser Plug-in Tools: these tools allow you to install a plugin to your Chrome or Firefox browser. The features and capabilities of web scraping tools can vary widely and require different investments of time and learning. Web scraping tools can range from manual browser plug-ins, to desktop applications, to purpose-built libraries within popular programming languages. The most crucial step for initiating a web scraping project is to select a tool to fit your research needs. These tags are designed to help text appear in readable ways on the web and like web browsers, web scraping tools can interpret these tags and follow instructions on how to collect the text they contain. Most text, though, is structured according to HTML or XHTML markup tags which instruct browsers how to display it. Some of that text is organized in tables, populated from databases, altogether unstructured, or trapped in PDFs. Please be advised that if you are collecting data from web pages, forums, social media, or other web materials for research purposes and it may constitute human subjects research, you must consult with and follow the appropriate UW-Madison Institutional Review Board process as well as follow their guidelines on “ Technology & New Media Research ”. 
If you are interested in identifying, collecting, and preserving textual data that exists online, there is almost certainly a scraping tool that can fit your research needs. But researchers also use web scraping to perform research on web forums or social media such as Twitter and Facebook, large collections of data or documents published on the web, and for monitoring changes to web pages over time. Companies use it for market and pricing research, weather services use it to track weather information, and real estate companies harvest data on properties. There are many applications for web scraping. So, instead of massive unstructured text files, you can transform your scraped data into spreadsheet, csv, or database formats that allow you to analyze and use it in your research. Most web scraping tools also allow you to structure the data as you collect it. Unlike web archiving, which is designed to preserve the look and feel of websites, web scraping is mostly used for gathering textual data. Also like web archiving, web scraping can be done through manual selection or it can involve the automated crawling of web pages using pre-programmed scraping applications. Like web archiving, web scraping is a process by which you can collect data from websites and save it for further research or preserve it over time.
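To make the idea of reading HTML tags and structuring the collected text more concrete, here is a minimal sketch in Python. It is only an illustration, not a recommended workflow: it uses the standard library's `html.parser` and `csv` modules as a stand-in for a dedicated library such as Beautiful Soup, and the class name `TableTextParser`, the function `html_table_to_csv`, and the sample property listing are all made up for this example.

```python
import csv
import io
from html.parser import HTMLParser


class TableTextParser(HTMLParser):
    """Hypothetical helper: collect the text of each table cell, row by row."""

    def __init__(self):
        super().__init__()
        self.rows = []     # finished rows, each a list of cell strings
        self._row = None   # row currently being built, or None outside <tr>
        self._cell = None  # text fragments of the current cell, or None

    def handle_starttag(self, tag, attrs):
        if tag == "tr":
            self._row = []
        elif tag in ("td", "th") and self._row is not None:
            self._cell = []

    def handle_data(self, data):
        # Only keep text that appears inside a table cell.
        if self._cell is not None:
            self._cell.append(data)

    def handle_endtag(self, tag):
        if tag in ("td", "th") and self._cell is not None:
            self._row.append("".join(self._cell).strip())
            self._cell = None
        elif tag == "tr" and self._row is not None:
            self.rows.append(self._row)
            self._row = None


def html_table_to_csv(html):
    """Return the cell text of an HTML table as CSV-formatted text."""
    parser = TableTextParser()
    parser.feed(html)
    parser.close()
    out = io.StringIO()
    csv.writer(out, lineterminator="\n").writerows(parser.rows)
    return out.getvalue()


# Made-up sample page fragment; a real project would download the page first.
SAMPLE_HTML = """
<table>
  <tr><th>Address</th><th>Price</th></tr>
  <tr><td>12 Oak St</td><td>250000</td></tr>
  <tr><td>98 Elm Ave</td><td>310000</td></tr>
</table>
"""

csv_text = html_table_to_csv(SAMPLE_HTML)
print(csv_text)
```

The resulting CSV text can be saved to a file and opened in a spreadsheet program. Dedicated scraping libraries handle messier, real-world pages far more robustly than this sketch does.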
